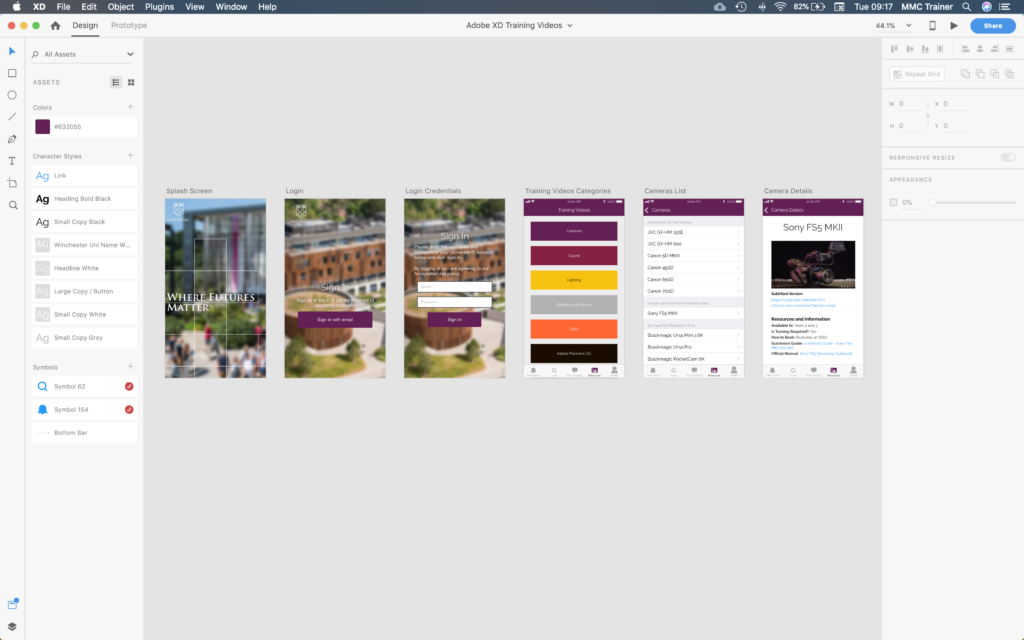

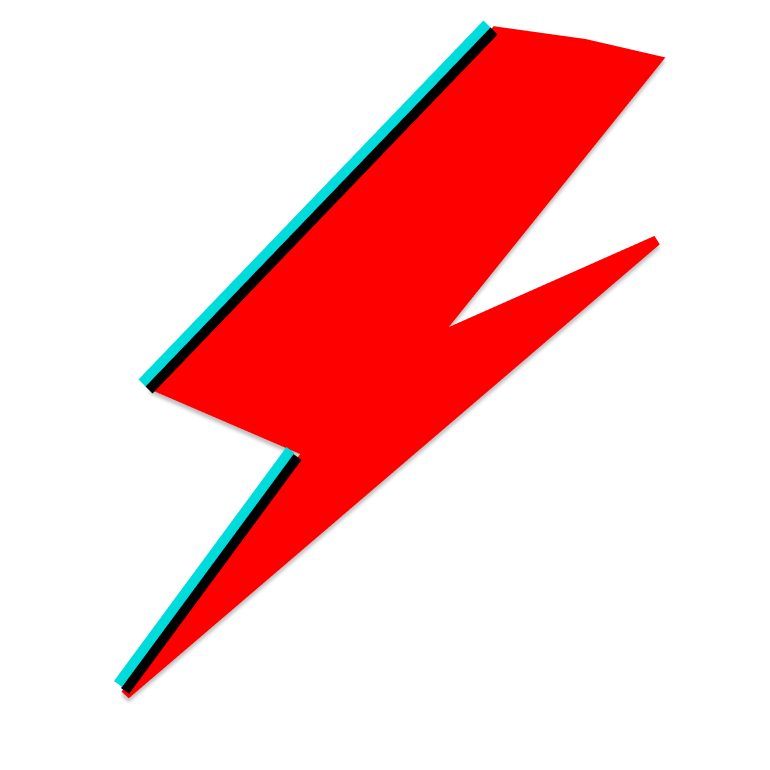

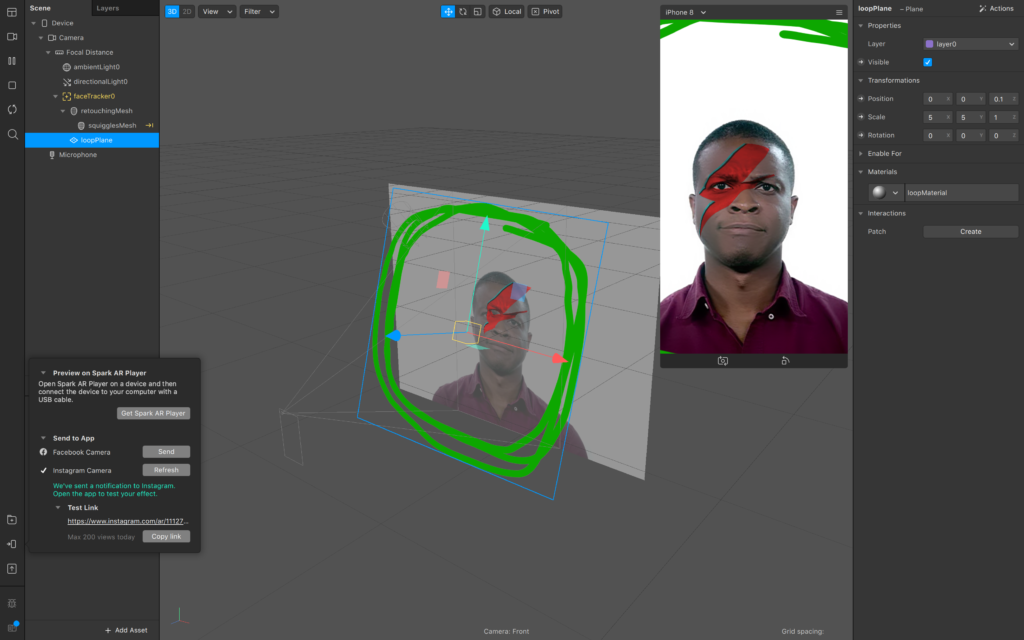

In this week’s lecture we experimented with producing Facebook filters using a combination of two software packages: Figma and Spark AR Studio.

Although I was aware of filters in mobile applications such as Facebook, Instagram, and Snapchat, I’d never thought about creating them myself. What surprised me most about this week’s experiments was how accessible the tools are to ordinary users. A computer with reasonable specifications is needed, but no part of the process was especially resource-intensive, and all of the interactive elements were fairly intuitive.

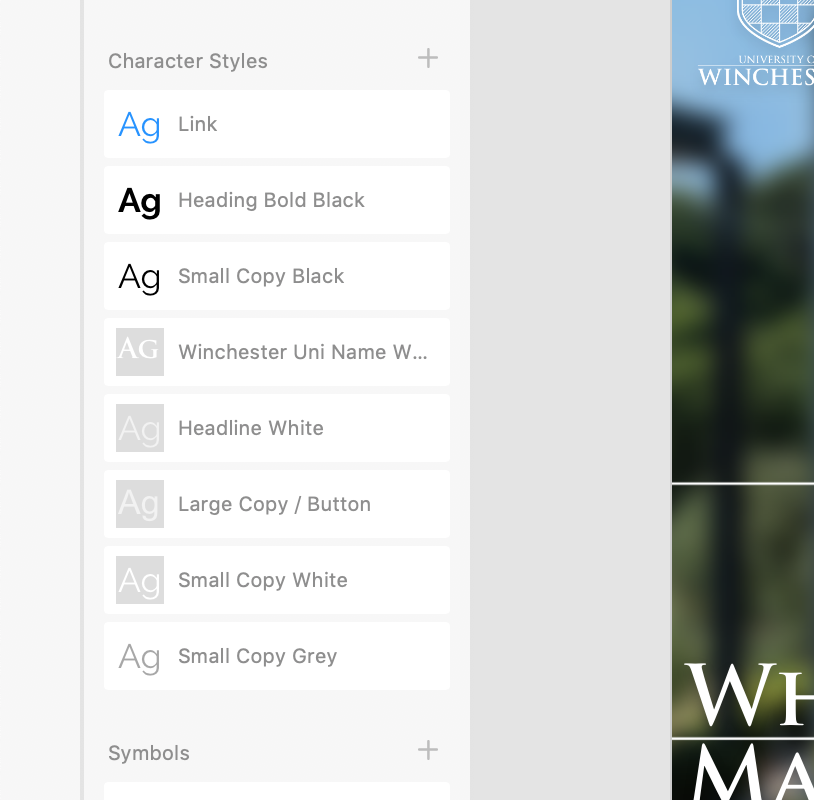

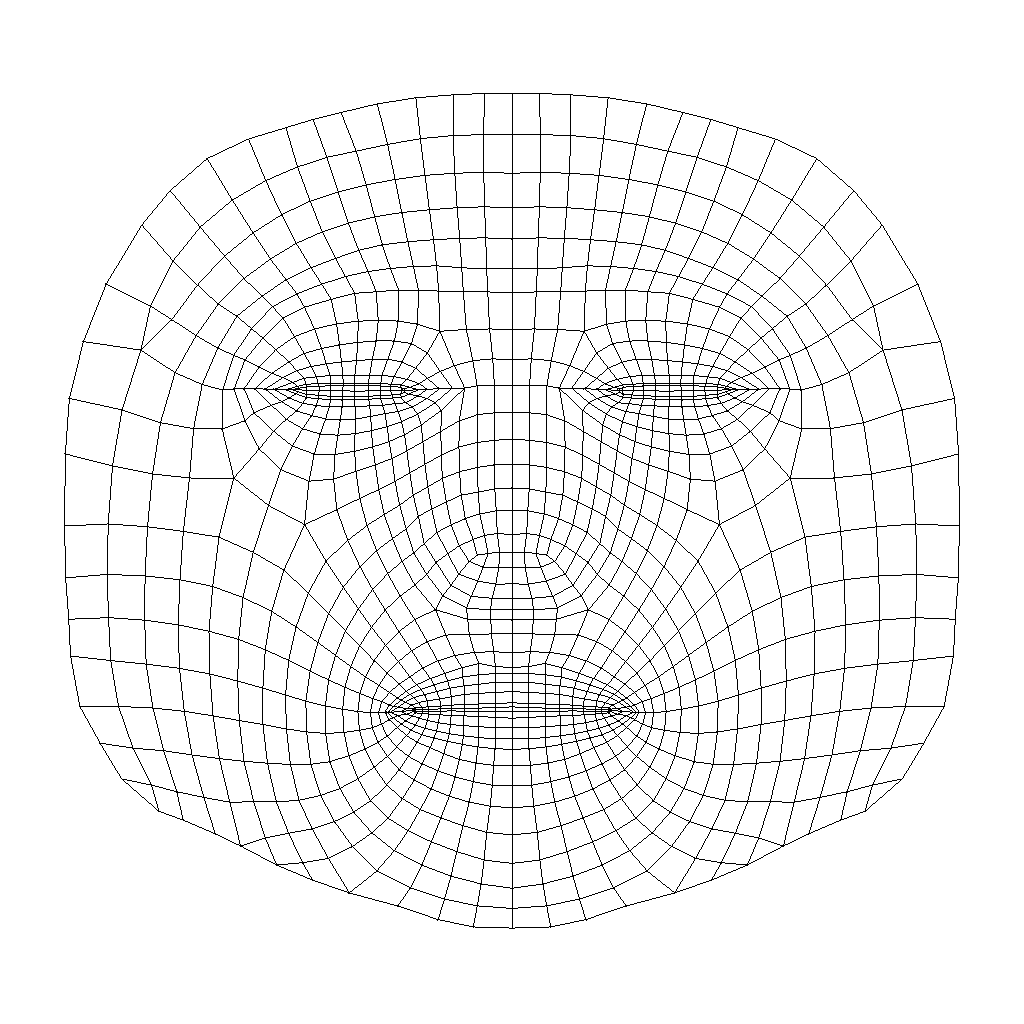

We used Figma purely for its online image-creation abilities: we uploaded a face mesh texture and used it as a map to draw on. In my experiment, I tried to replicate David Bowie’s Ziggy Stardust face paint.

In Spark AR Studio, we imported these designs (minus the face mesh texture) and then mapped them onto a face. Moving previews of real people, with differing face shapes, hair, and skin colour, were available for testing the outcome.

You could also add invisible planes around the faces of these models. I imagine this would expand the possibilities of the software considerably: with some extra illustration and animation skills, perhaps someone could produce birds flying around a model’s head.

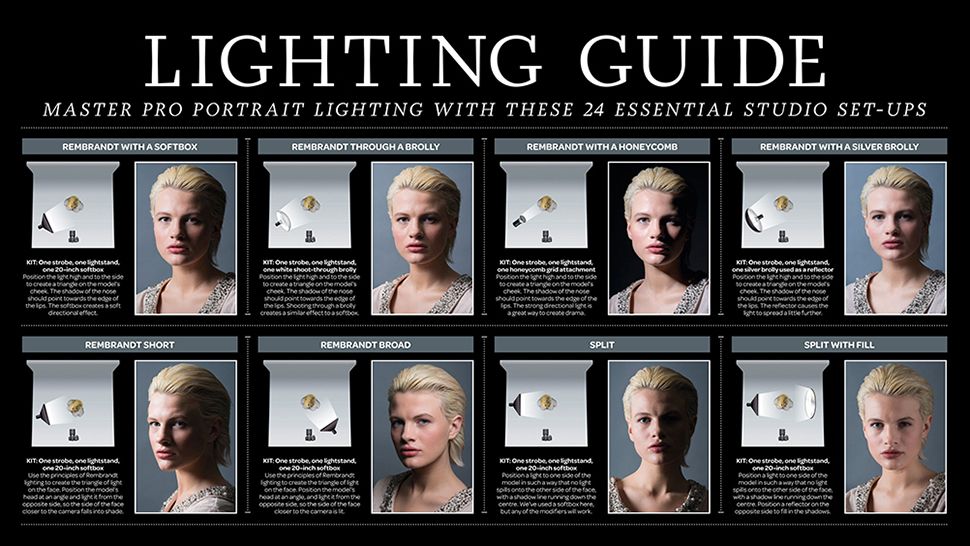

In relation to my own studies, I could imagine using these filters to demonstrate how different lighting setups produce different outcomes, perhaps as a replacement for the Lighting Guide posters that adorn the walls of photography studios in many sixth form colleges. This would also remove the need for any lighting equipment, or even for students to be in the same room as the teacher. It could be useful for online teaching, especially if the physical side of setting up lights could be demonstrated using a different piece of software.